OKLAHOMA CITY — A body camera captured every word and bark uttered as police Sgt. Matt Gilmore and his K-9 dog, Gunner, searched for a group of suspects for nearly an hour.

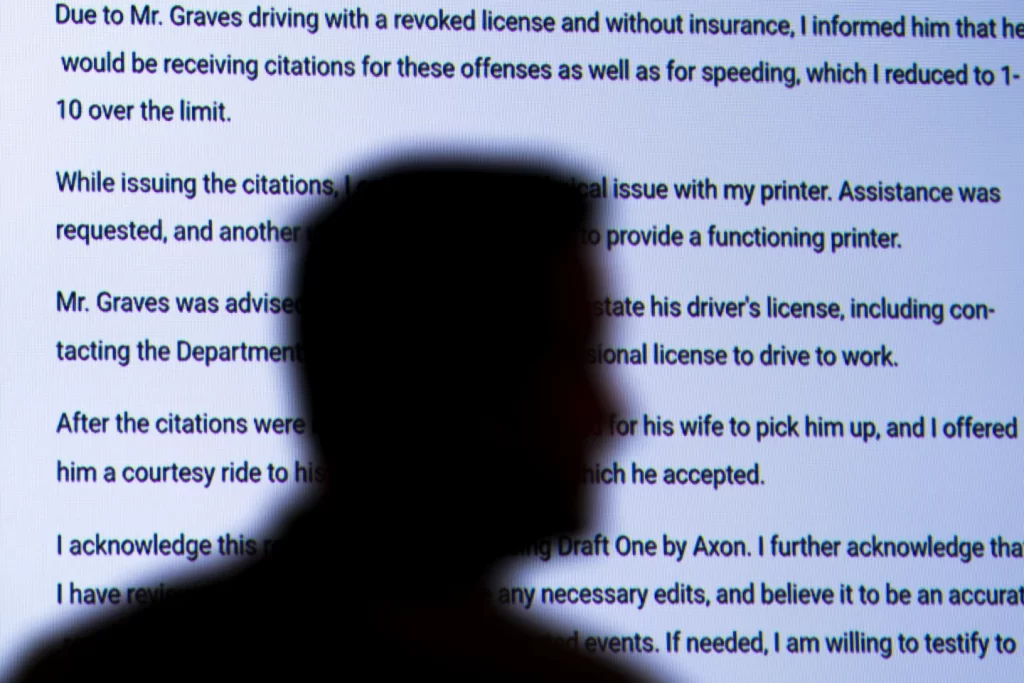

Normally, the Oklahoma City police sergeant would grab his laptop and spend another 30 to 45 minutes writing up a report about the search. But this time he had artificial intelligence write the first draft.

Pulling from all the sounds and radio chatter picked up by the microphone attached to Gilmore’s body camera, the AI tool churned out a report in eight seconds.

“It was a better report than I could have ever written, and it was 100% accurate. It flowed better,” Gilmore said. It even documented a fact he didn’t remember hearing — another officer’s mention of the color of the car the suspects ran from.

Oklahoma City’s police department is one of a handful to experiment with AI chatbots to produce the first drafts of incident reports. Police officers who’ve tried it are enthused about the time-saving technology, while some prosecutors, police watchdogs and legal scholars have concerns about how it could alter a fundamental document in the criminal justice system that plays a role in who gets prosecuted or imprisoned.

Built with the same technology as ChatGPT and sold by Axon, best known for developing the Taser and as the dominant U.S. supplier of body cameras, the tool could become what Gilmore describes as another “game changer” for police work.

“They become police officers because they want to do police work, and spending half their day doing data entry is just a tedious part of the job that they hate,” said Axon’s founder and CEO Rick Smith, describing the new AI product — called Draft One — as having the “most positive reaction” of any product the company has introduced.

“Now, there’s certainly concerns,” Smith added. In particular, he said district attorneys prosecuting a criminal case want to be sure that police officers — not solely an AI chatbot — are responsible for authoring their reports because they may have to testify in court about what they witnessed.

“They never want to get an officer on the stand who says, well, ‘The AI wrote that, I didn’t,’” Smith said.

AI technology is not new to police agencies, which have adopted algorithmic tools to read license plates, recognize suspects’ faces, detect gunshot sounds and predict where crimes might occur. Many of those applications have come with privacy and civil rights concerns and attempts by legislators to set safeguards. But the introduction of AI-generated police reports is so new that there are few, if any, guardrails guiding their use.

Concerns about society’s racial biases and prejudices getting built into AI technology are just part of what Oklahoma City community activist aurelius francisco finds “deeply troubling” about the new tool, which he learned about from The Associated Press. francisco prefers to lowercase his name as a tactic to resist professionalism.

“The fact that the technology is being used by the same company that provides Tasers to the department is alarming enough,” said francisco, a co-founder of the Foundation for Liberating Minds in Oklahoma City.

He said automating those reports will “ease the police’s ability to harass, surveil and inflict violence on community members. While making the cop’s job easier, it makes Black and brown people’s lives harder.”

Before trying out the tool in Oklahoma City, police officials showed it to local prosecutors who advised some caution before using it on high-stakes criminal cases. For now, it’s only used for minor incident reports that don’t lead to someone getting arrested.

“So no arrests, no felonies, no violent crimes,” said Oklahoma City police Capt. Jason Bussert, who handles information technology for the 1,170-officer department.

That’s not the case in another city, Lafayette, Indiana, where Police Chief Scott Galloway told the AP that all of his officers can use Draft One on any kind of case and it’s been “incredibly popular” since the pilot began earlier this year.

Or in Fort Collins, Colorado, where police Sgt. Robert Younger said officers are free to use it on any type of report, though they discovered it doesn’t work well on patrols of the city’s downtown bar district because of an “overwhelming amount of noise.”

Along with using AI to analyze and summarize the audio recording, Axon experimented with computer vision to summarize what’s “seen” in the video footage, before quickly realizing that the technology was not ready.

“Given all the sensitivities around policing, around race and other identities of people involved, that’s an area where I think we’re going to have to do some real work before we would introduce it,” said Smith, the Axon CEO, describing some of the tested responses as not “overtly racist” but insensitive in other ways.

Those experiments led Axon to focus squarely on audio in the product unveiled in April during its annual company conference for police officials.

The technology relies on the same generative AI model that powers ChatGPT, made by San Francisco-based OpenAI. OpenAI is a close business partner with Microsoft, which is Axon’s cloud computing provider.

“We use the same underlying technology as ChatGPT, but we have access to more knobs and dials than an actual ChatGPT user would have,” said Noah Spitzer-Williams, who manages Axon’s AI products. Turning down the “creativity dial” helps the model stick to facts so that it “doesn’t embellish or hallucinate in the same ways that you would find if you were just using ChatGPT on its own,” he said.

Axon won’t say how many police departments are using the technology. It’s not the only vendor, with startups like Policereports.ai and Truleo pitching similar products. But given Axon’s deep relationship with police departments that buy its Tasers and body cameras, experts and police officials expect AI-generated reports to become more widespread in the coming months and years.

Before that happens, legal scholar Andrew Ferguson would like to see more of a public discussion about the benefits and potential harms. For one thing, the large language models behind AI chatbots are prone to making up false information, a problem known as hallucination that could introduce convincing and hard-to-notice falsehoods into a police report.

“I am concerned that automation and the ease of the technology would cause police officers to be sort of less careful with their writing,” said Ferguson, a law professor at American University working on what’s expected to be the first law review article on the emerging technology.

Ferguson said a police report is important in determining whether an officer’s suspicion “justifies someone’s loss of liberty.” It’s sometimes the only testimony a judge sees, especially for misdemeanor crimes.

Human-generated police reports also have flaws, Ferguson said, but it’s an open question which is more reliable.